Enterprise AI Weekly #35 Mini

Is Vibe Coding commoditised, Claude Haiku 4.5 arrives, Microsoft’s in-house image generator, Jon Gray on AI, Microsoft’s new agent framework, will Google cure cancer, Apple announces the M5 and MCP devtools

Welcome to Enterprise AI Weekly #35 Mini

Welcome to Enterprise AI Weekly, published by me, Paul O’Brien, Group Chief AI Officer and Global Solutions CTO at Davies. This is a short, easy-to-read look at what’s happening in the world of AI - and what it means for business.

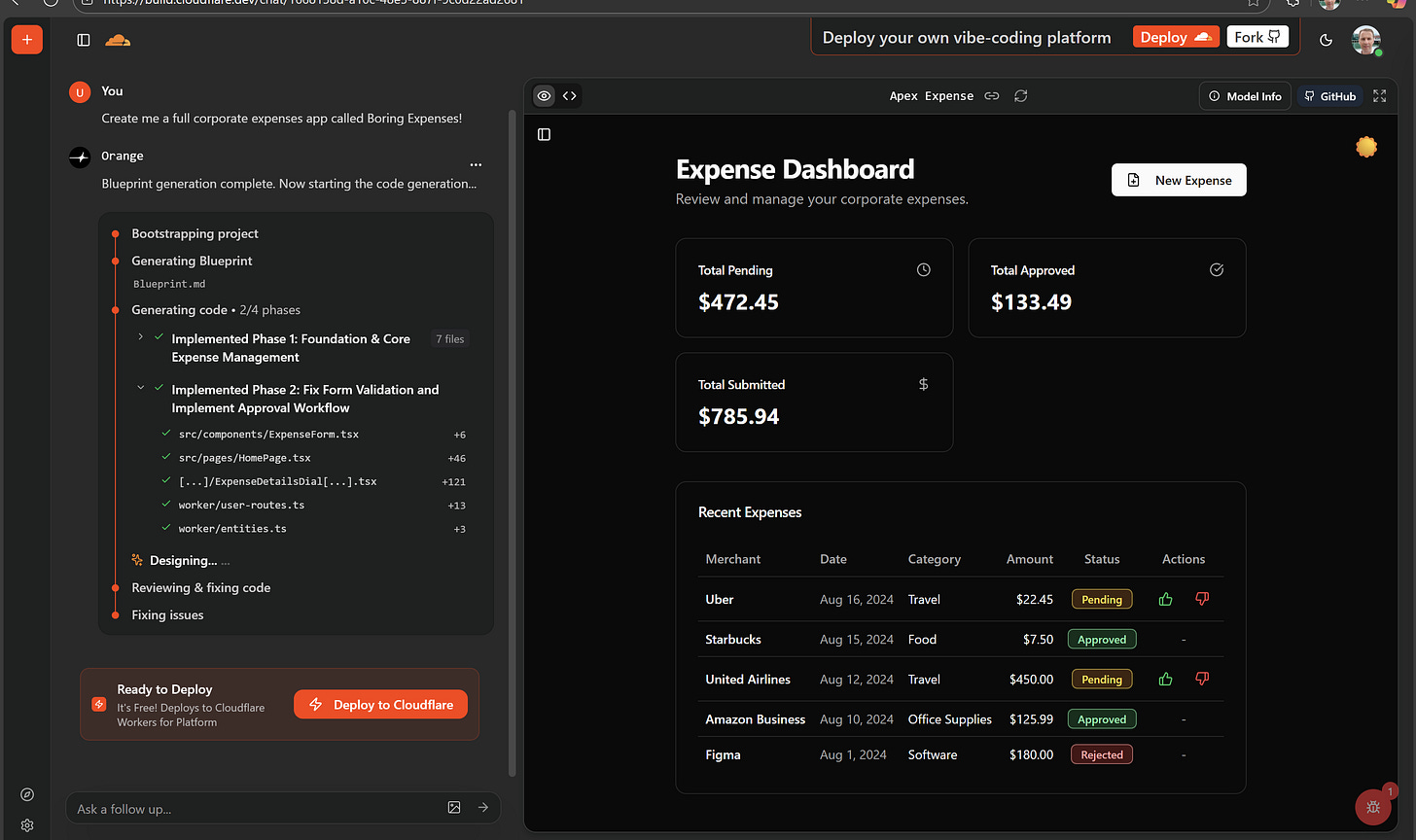

Alongside this mini edition of the main newsletter, I spend an hour or so each week experimenting with AI development (what I call Vibe Coding). Our first demo app, Boring Expenses, shows what can be built with AI tools in just one working day. If you haven’t seen it yet, check out the Boring demo. 😊

If you’re reading this for the first time, you can read previous posts at the Enterprise AI Weekly Substack page. Please share the link and encourage others who might find it interesting to sign up.

Are Vibe Coding tools becoming commoditised?

In building Boring Expenses, I’ve tested several AI development tools like Bolt, Lovable and GitHub Spark - and there are plenty more out there. It feels like these tools are starting to blur together, offering similar ways to build apps faster with AI’s help.

Now Cloudflare has jumped in with VibeSDK, a free, open-source platform that lets anyone spin up their own “vibe coding” setup in one click. It’s an exciting move that could make AI-assisted development even more accessible.

Now, onto the rest of the news. Enjoy EAIW #35!

1. Anthropic launches Claude Haiku 4.5

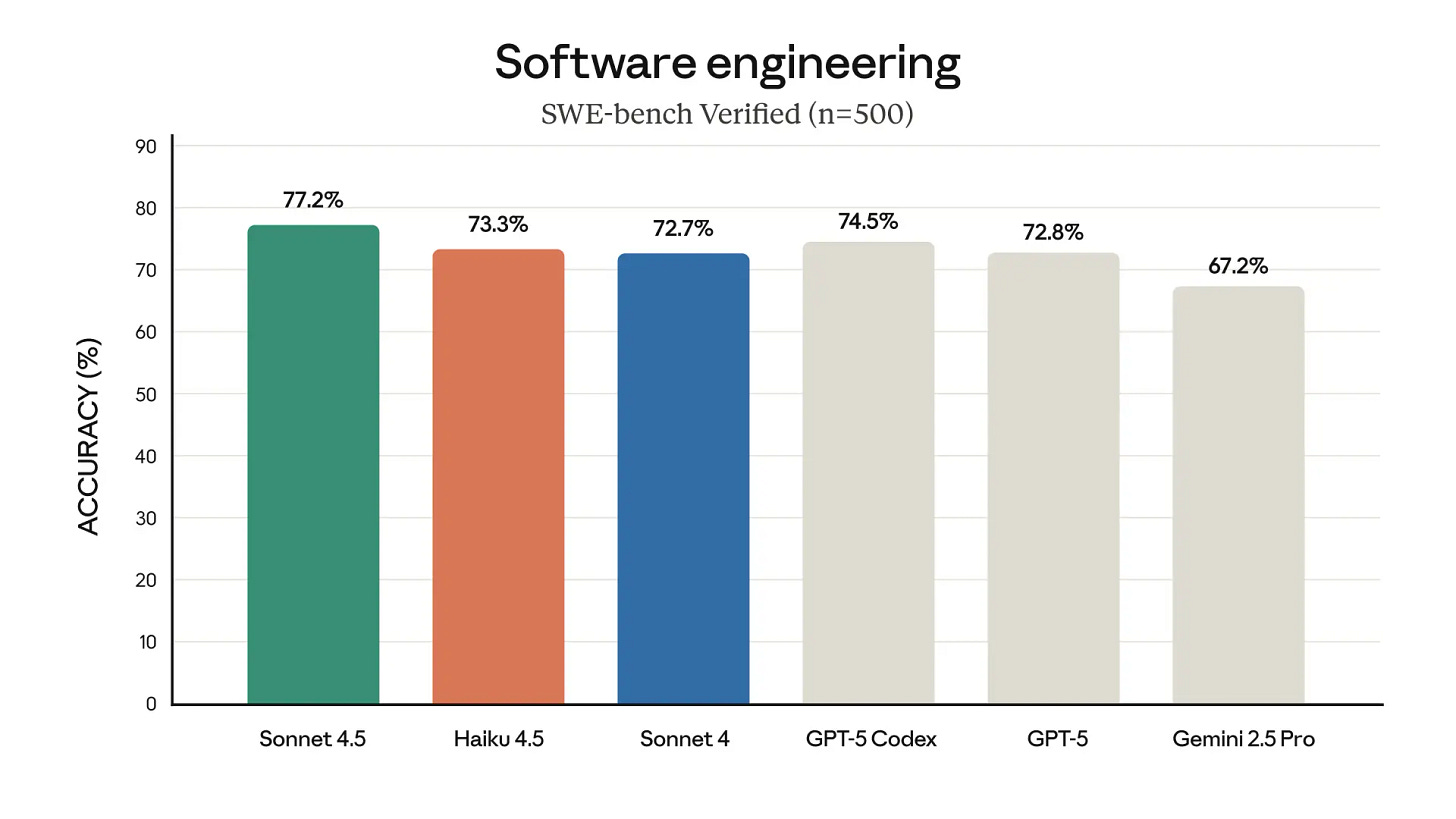

Anthropic has released Claude Haiku 4.5, a small but powerful AI model that delivers near-frontier performance at a fraction of the cost and latency of its larger peers. It achieves around 90% of the coding ability of the flagship Claude Sonnet 4.5 while running over twice as fast and at one-third the price. This makes it ideal for real-time applications like customer support, coding assistants, and embedded agents. Importantly, Anthropic reports improved safety performance, earning Haiku 4.5 a lower-risk AI Safety Level 2 classification - broadening its availability via enterprise APIs, Amazon Bedrock, and Google Vertex AI.

At just $1 per million input tokens and $5 per million output tokens, Haiku 4.5 highlights a growing shift toward efficiency and orchestration in enterprise AI. Anthropic suggests pairing one premium model (like Sonnet 4.5) as a “planner” with multiple Haiku instances as fast “doers,” an approach that balances cost with speed and reliability. Though rivals such as DeepSeek-V3.2-Exp may offer even better value, Haiku 4.5 exemplifies the new “speed frontier” in AI - enabling faster prototyping, scalable agentic systems, and tighter feedback loops between people and machines.
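To make the planner-and-doers economics concrete, here is a rough back-of-the-envelope sketch in Python. The Haiku prices are the ones quoted above; the Sonnet price is an assumption derived from the “one-third the price” claim (so 3× Haiku), and the token counts and function names are purely illustrative rather than taken from any real workload or Anthropic API.

```python
# Prices in USD per million tokens.
HAIKU_INPUT, HAIKU_OUTPUT = 1.00, 5.00  # quoted in the article
# Assumption: Sonnet 4.5 costs 3x Haiku, derived from "one-third the price".
SONNET_INPUT, SONNET_OUTPUT = 3 * HAIKU_INPUT, 3 * HAIKU_OUTPUT


def cost_usd(input_tokens, output_tokens, input_price, output_price):
    """Cost of one model call, given per-million-token prices."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def planner_doer_cost(plan_in, plan_out, doers, doer_in, doer_out):
    """One premium 'planner' call plus several cheap 'doer' calls."""
    planner = cost_usd(plan_in, plan_out, SONNET_INPUT, SONNET_OUTPUT)
    workers = doers * cost_usd(doer_in, doer_out, HAIKU_INPUT, HAIKU_OUTPUT)
    return planner + workers


def all_premium_cost(plan_in, plan_out, doers, doer_in, doer_out):
    """The same workload with every call sent to the premium model."""
    planner = cost_usd(plan_in, plan_out, SONNET_INPUT, SONNET_OUTPUT)
    workers = doers * cost_usd(doer_in, doer_out, SONNET_INPUT, SONNET_OUTPUT)
    return planner + workers


# Hypothetical task: one planning call, then ten sub-tasks.
task = dict(plan_in=5_000, plan_out=1_000, doers=10, doer_in=20_000, doer_out=2_000)
mixed = planner_doer_cost(**task)
premium = all_premium_cost(**task)
print(f"Planner + Haiku doers: ${mixed:.2f}")
print(f"All premium model:     ${premium:.2f}")
```

With these made-up numbers the mixed setup comes out at roughly $0.33 per task against $0.93 for sending everything to the premium model. The real saving depends entirely on how much of the work can safely be delegated to the smaller model.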

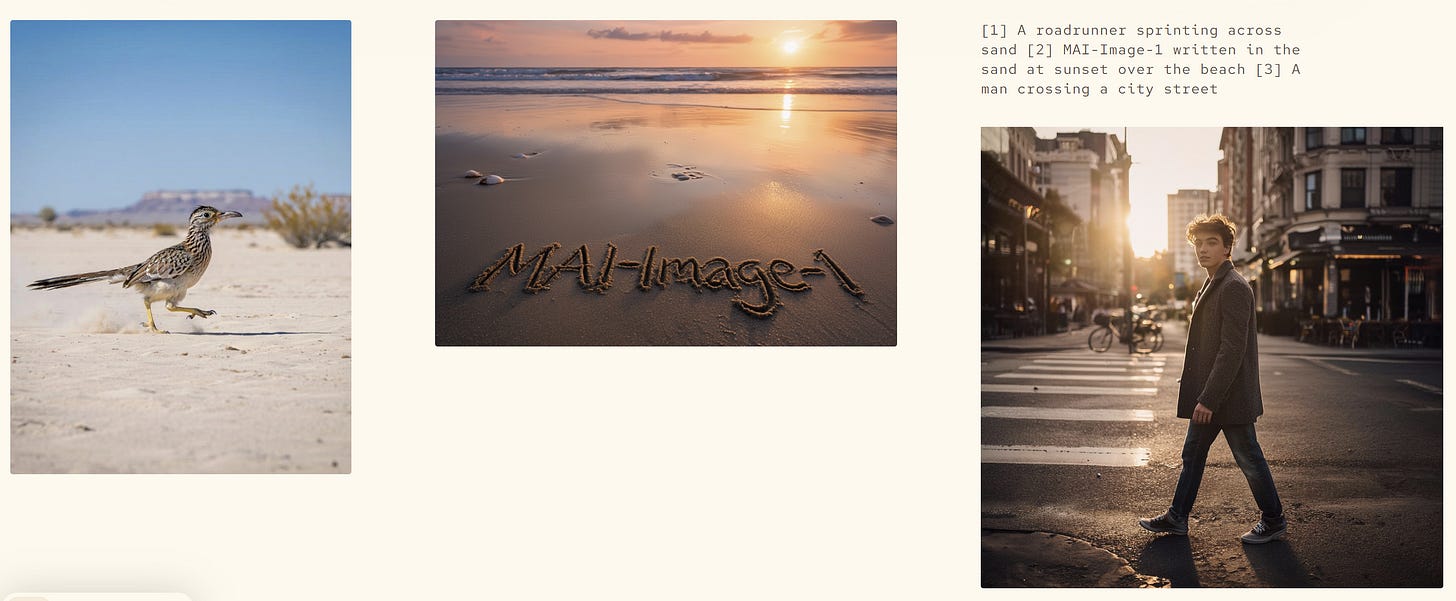

2. Microsoft introduces its first in-house AI image generator

Microsoft AI (MAI) has launched MAI-Image-1, its first in-house image generation model, which has quickly entered the top 10 on the LMArena leaderboard. It produces fast, photorealistic, and diverse visuals, aimed at speeding up creative workflows for professionals and enterprises alike. This marks another step in Microsoft’s shift towards building and owning its own AI models - following MAI-Voice-1 for speech and MAI-1-preview for text - reducing reliance on external partners like OpenAI and strengthening control over tools such as Copilot and Bing Image Creator. Early testers highlight MAI-Image-1’s quality, speed, and realism, especially in complex or dynamic visual scenes, making it ideal for design and marketing teams working to tight deadlines.

The model also reflects Microsoft’s careful stance on responsible AI deployment. By testing MAI-Image-1 in controlled environments before broad release, Microsoft aims to ensure strong reliability, safety, and data privacy standards for enterprise use. Owning its technology stack allows faster adaptation to customer needs and tighter integration across its product suite. For enterprises, this combination of speed, safety, and in-house governance positions MAI-Image-1 as both a creative accelerator and a compliance-friendly AI tool - signalling Microsoft’s growing confidence and independence in the generative AI space.

3. Blackstone’s Jon Gray talks on the economy and AI

Blackstone President Jon Gray used his September 2025 CIO Symposium keynote to deliver a clear message: while the global economy remains uncertain, AI has become the defining force in modern investment. He noted that Blackstone’s portfolio companies are showing strong revenue and margin growth, suggesting fears of recession are overblown. With inflation cooling and interest rates likely to ease, Gray anticipates a rebound in deal-making and IPOs heading into 2026 - driven by the productivity and capital efficiency gains unlocked by AI adoption across industries.

The core of Gray’s message was the “picks and shovels” opportunity: investing in the physical backbone of the AI revolution - data centres, power infrastructure, semiconductors, and grids - rather than just the flashy applications. These “boring” assets, he argued, could prove the most profitable of all. For enterprises, the takeaway is twofold: AI is not only reshaping business models but redefining where capital flows. The challenge is to seize the opportunity responsibly - balancing innovation with resilience and ensuring AI enthusiasm doesn’t outpace sound strategy or risk management.

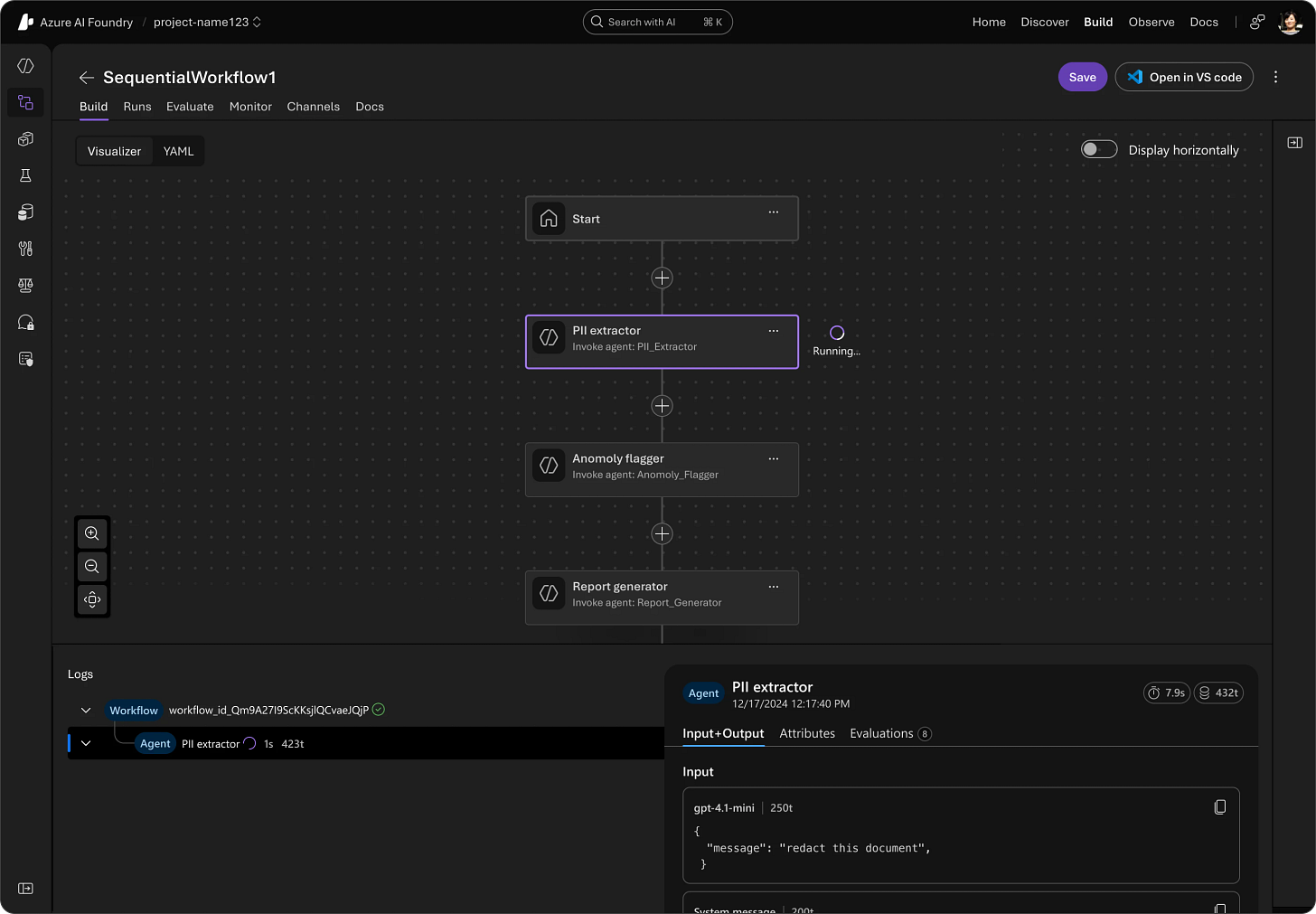

4. Microsoft’s ‘Agent Framework’ clarifies the agentic stack

Microsoft has released the Microsoft Agent Framework, a new open-source SDK and runtime that unifies its previous agent technologies - Semantic Kernel and AutoGen - into a single, enterprise-ready platform for building and managing multi-agent AI systems. Supporting both .NET and Python, it brings together orchestration, security, observability, and compliance features to simplify complex agentic workflows. The framework integrates open standards like MCP and A2A, offers graph-based orchestration, and includes enterprise essentials such as OpenTelemetry observability, Microsoft Entra ID security, and human-in-the-loop governance. Early adopters like KPMG, BMW, and Fujitsu are already using it in production.

Now part of Azure AI Foundry, the framework helps organisations design, deploy, and govern multi-agent systems more effectively. Foundry adds native tools for observability, compliance, and workflow orchestration - supporting long-running, multi-step business processes such as onboarding, financial operations, and supply chain automation. Together, the Microsoft Agent Framework and Azure AI Foundry create a “factory for agents,” blending open-source flexibility with Microsoft’s enterprise-grade reliability. For organisations invested in the Microsoft ecosystem, this offers a clear, compliant path to scaling secure, interoperable, and production-ready agentic AI solutions.

5. AI might actually cure cancer

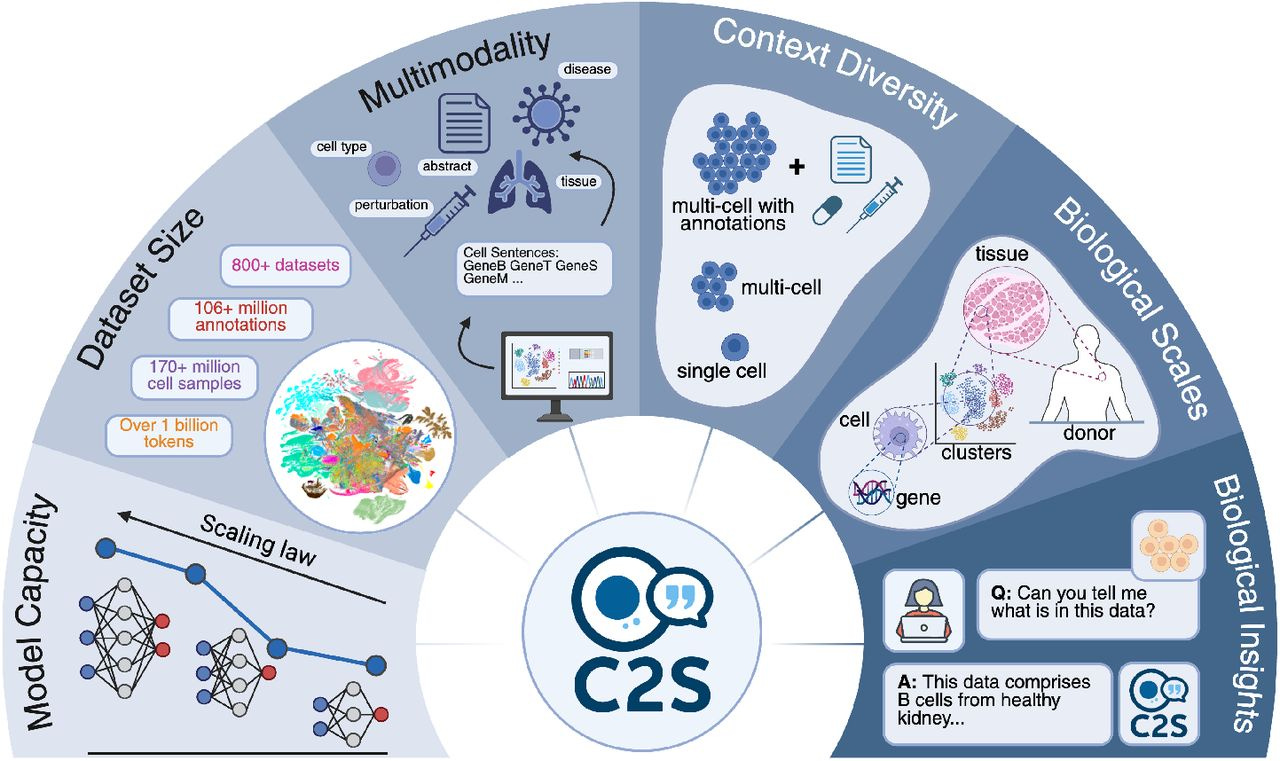

AI might genuinely be on the path to curing cancer. In a breakthrough collaboration between Google DeepMind and Yale University, researchers unveiled Cell2Sentence-Scale 27B (C2S-Scale), an AI model that has generated and validated a new biological hypothesis with major therapeutic potential. Built on Google’s open Gemma architecture, the model discovered an immune pathway that could help “cold” tumours become “hot” - making them far more responsive to treatments like immunotherapy. It even pinpointed a promising drug combination (silmitasertib and low-dose interferon) that boosted antigen presentation by 50%, allowing the immune system to better detect and attack cancer cells.

This marks a major moment in scientific AI, where models aren’t just analysing data but helping to drive real-world discovery. DeepMind’s work hints at a new kind of “AI co-scientist” - systems that propose, test, and validate hypotheses alongside human researchers. If this approach scales, it could transform how we develop medicines, turning the idea of algorithmic biology from fiction into fact. For the first time, AI may be learning to understand - and rewrite - the biological language of life itself.

POB’s closing thoughts

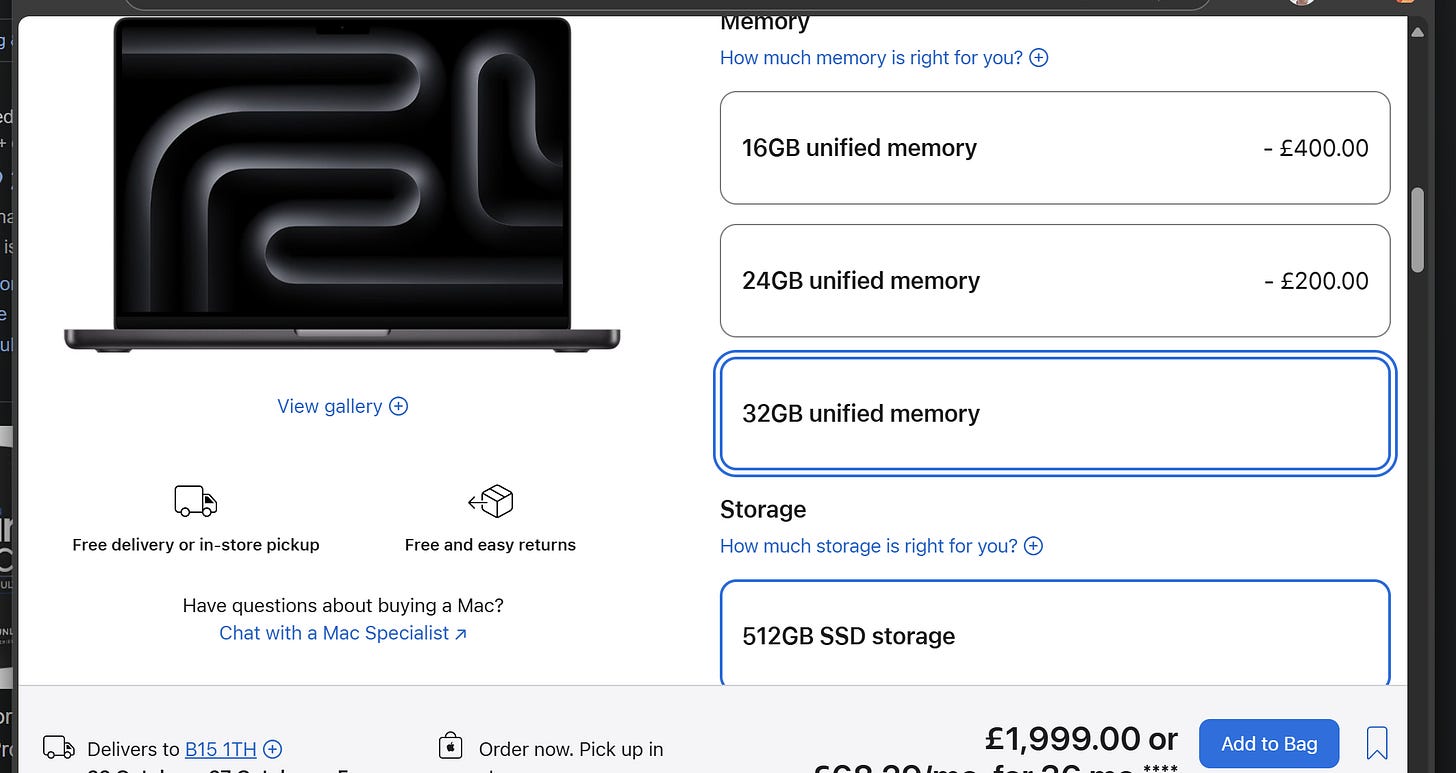

I’m travelling for work next week and, of course, wondering how to keep using AI on the plane. My personal laptop setup isn’t ideal for running local LLMs - my Intel Core Ultra 9 Processor 285H with an Intel Arc 140T GPU is decent but not tuned for that, and my work laptop prioritises portability over power. So, I’ll be hoping for good Wi-Fi! Meanwhile, Apple has just unveiled its M5-powered MacBook Pros, boasting over 4× the AI GPU performance of the M4, plus Neural Accelerators and higher memory bandwidth. The catch? RAM costs a fortune - 32GB adds £400, and top-tier M4 Max models stretch close to £4,000. For now, my trusty Honor MagicBook 14 Pro (32GB/1TB for under £1,000) stays put.

A new report from Epoch AI on behalf of Google DeepMind also caught my eye this week. It predicts that AI compute demand will grow 1,000× by 2030, with frontier data centres using up to 1.2% of global electricity. Even with that energy footprint, AI is expected to play an ever larger role in science, software, and engineering. And for the coders: Google has released a Chrome DevTools MCP server that lets AI coding assistants directly debug web pages inside Chrome - improving accuracy and insight for performance tuning. Very cool.

Thanks for reading - I hope you have a great weekend! 👍

I’d love to hear your feedback on whether you enjoy reading the Substack, find it useful, or would like to see something different in a future post. What AI topics are you most interested in for future explainers? Are there any specific AI tools or developments you’d like to see covered? Remember, if you have any questions about this Substack, AI or how Davies can help your business, you can reply to this message to reach me directly.

Finally, remember that while I may mention interesting new services in this post, you shouldn’t upload or enter business data into any external web service or application without ensuring it has been explicitly approved for use.

Disclaimer: The views and opinions expressed in this post are my own and do not necessarily reflect those of my employer.